Universities’ value judgements about research are becoming ‘coupled’ to social media platforms as they compete for funding by demonstrating their influence beyond academia, an analysis suggests. The study, by researchers at the University of Cambridge, focused on how universities use social media in ‘impact’ case studies, which are a requirement of the Research Excellence Framework (REF). The REF is a periodic assessment of university research, run by UK higher education funding bodies; the current review ends next year.

Researchers examined 1,675 submissions from the previous exercise in 2014. They found that universities consistently use platform metrics – such as follower numbers, likes and shares – to claim that their research is making an impression. The authors describe this as a ‘naïve and problematic’ grasp of what both the data and ‘impact’ actually mean. But they suggest that in a competitive funding environment in which that meaning is in any case unclear, universities are reaching for social media metrics as easy-to-access measures of success that they hope might attract funding.

That process links the opaque, algorithm-driven value systems of platforms such as Facebook and Twitter to universities’ ‘evaluative infrastructures’. The study adds that this is just one example of how digital platforms are changing higher education, often unnoticed – and with uncertain consequences.

The study was undertaken by Dr Mark Carrigan and Dr Katy Jordan, at the Faculty of Education, University of Cambridge; Dr Carrigan has since become a lecturer at the Manchester Institute of Education.

“Social media platforms seem to be acquiring a role in how numbers manage higher education, as a sort of proxy for impact capacity,” Carrigan said. “We are starting to see academics seeking more followers and more shares not to support their research, but because it might be good for their careers.”

“Those metrics, however, result from social media companies manipulating content and user behaviour to maximise engagement with their platforms – a priority which then starts to become loosely coupled to universities’ own evaluative judgements about research.”

While the study in no way questions the importance of demonstrating impact as part of the REF assessment process, it does suggest that many universities have struggled since 2014 to understand the rather open-ended requirement. Impact is defined as: “an effect on, change or benefit to the economy, society, culture, public policy or services, health, the environment, or quality of life, beyond academia.” This will be worth 25% of the score awarded to submissions in REF 2021.

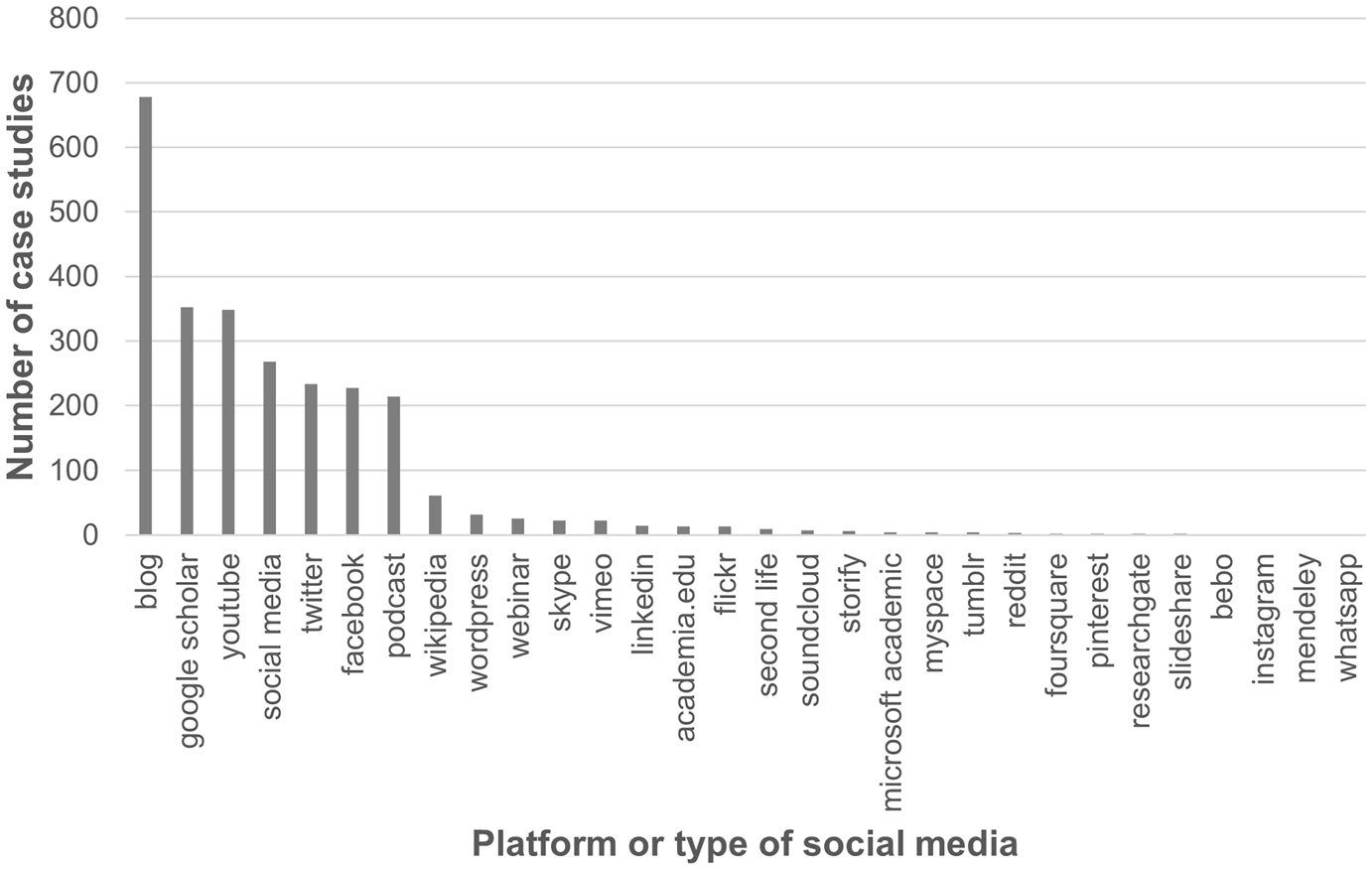

The researchers scanned 1,675 REF case studies from a public database for each of 42 terms relating to social media, to identify patterns in the way social media was used. They then analysed 100 randomly selected case studies in closer detail.
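As a rough illustration of what such a term scan involves, the Python sketch below counts the share of case studies that mention at least one social media term, overall and per subject panel. It is not the authors' code. The term list is only a subset drawn from the terms named in this article (the full list of 42 is not given here), and the function names, panel labels and sample texts are hypothetical.

```python
import re
from collections import defaultdict

# Illustrative subset only: the study used 42 terms, which this article does not list in full.
TERMS = ["google scholar", "youtube", "facebook", "twitter",
         "podcast", "blog", "social media"]


def matched_terms(text, terms=TERMS):
    """Return the terms found in text (case-insensitive, whole words, optional plural 's')."""
    found = set()
    for term in terms:
        if re.search(rf"\b{re.escape(term)}s?\b", text, re.IGNORECASE):
            found.add(term)
    return found


def share_mentioning(case_studies, terms=TERMS):
    """case_studies is a list of (panel, text) pairs.

    Returns the overall share of case studies mentioning any term,
    plus a dict with the same share for each panel.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for panel, text in case_studies:
        totals[panel] += 1
        if matched_terms(text, terms):
            hits[panel] += 1
    overall = sum(hits.values()) / len(case_studies)
    by_panel = {panel: hits[panel] / totals[panel] for panel in totals}
    return overall, by_panel


# Hypothetical sample texts, invented purely to exercise the functions.
sample = [
    ("Arts and Humanities", "The exhibition blog attracted 5,000 views."),
    ("Arts and Humanities", "Findings informed a museum's collections policy."),
    ("Medical Sciences", "The trial changed national prescribing guidance."),
    ("Medical Sciences", "A Twitter campaign and two podcasts followed the trial."),
]
overall, by_panel = share_mentioning(sample)
print(overall, by_panel)
```

A count like this only shows that a term appears in a case study. It says nothing about how the term is used, which is presumably why the researchers also read a random sample of case studies in closer detail.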

Universities consistently mentioned social media in about 25% of their REF submissions. A handful of terms appeared far more often than the rest: Google Scholar, YouTube, Facebook, Twitter, “podcasts”, “blogs” and (as a general term) “social media”. They appeared most in case studies from the arts and humanities (46.3%) and least in the biological and medical sciences (13.1%).

Although some references were entirely valid, a surprisingly high number of case studies attempted to claim impact by simply recording statistical information from social platforms. These included citations and research rankings from sources such as Google Scholar, and more generally follower counts, comments, views, downloads, likes, mentions and shares.

The researchers describe the fact that so many universities took this flawed approach as a symptom of institutional isomorphism: a phenomenon in which organisations imitate each other when dealing with uncertain goals, creating a false notion of ‘best practice’.

“The statistical data only represents social media activity; at best it’s preliminary to claiming real impact,” Carrigan said. “At the same time, it’s becoming part of what universities nevertheless consider effective digital engagement, and potentially gets absorbed into the business case for what researchers are expected to do.”

Because successful engagement on social media corresponds not to the needs of people affected by the research itself, but to the requirements of the companies running the platforms, the authors suggest that this ‘loose coupling’ may lead to various problems if it goes unaddressed.

Researchers from less-popular disciplines, for example, may struggle to meet institutional demands to build a following for their work. Perhaps more worryingly, social media often reproduces and intensifies various inequalities. Other research has, for instance, found that white males are less likely to be harassed online than other demographic groups, and these academics may therefore find it easier to be rewarded for high levels of engagement than other colleagues.

The study notes that this is just one example of how higher education has embraced digital platforms ‘at a dizzying rate’ – without necessarily noting the implications. In particular, the COVID-19 pandemic has witnessed a rapid “online pivot” towards remote learning. Platforms such as Teams and Zoom are now widely used for lectures and seminars, while others support learning management (Moodle), student engagement (Eventus) and alumni engagement (Ellucian). So far their wider effects on the culture and priorities of universities seem to have been largely overlooked.

The researchers point out that social media itself can be used profitably in research – for example to build networks with ‘end users’ of research projects – but argue that this potential should be more systematically integrated into academics’ professional skills training.

“Higher education social media policies need to catch up with the fact that this is going on,” Jordan said. “At the moment, the main incentive academics are offered for using social media is amplification: the idea that your research might go viral. We should be moving towards an institutional culture that focuses more on how these platforms can facilitate real engagement with research.”

The study is published in Postdigital Science and Education. This article was originally published by the Faculty of Education at the University of Cambridge.

At least universities are not trying to measure the value of research in the real world. Using social media platforms for this has problems, but no more so than the system of measuring worth by citations, which is in effect a count of likes by peers, mixed with dislikes. In 2013 I set up a blog to provide my views outside the limited world of peer-reviewed journals and conference papers. There I can express my opinion more quickly and more freely than in a reviewed publication: https://blog.highereducationwhisperer.com/
